Machine Perception

Teaching machines to see, hear, and interpret the visual and acoustic world.

The Machine Perception Group develops algorithms and methods that allow computers to match the remarkable performance of humans, and in some cases surpass it, across vision, video, audio, and 3D scene understanding.

Labs in Machine Perception

Hoshen Lab — Prof. Yedid Hoshen

Research focuses on deep learning for computer vision, self-supervised learning, and anomaly detection. The lab develops methods for visual synthesis, weight space learning on public model repositories, and generative AI for image and video understanding.

Lab Website ↗

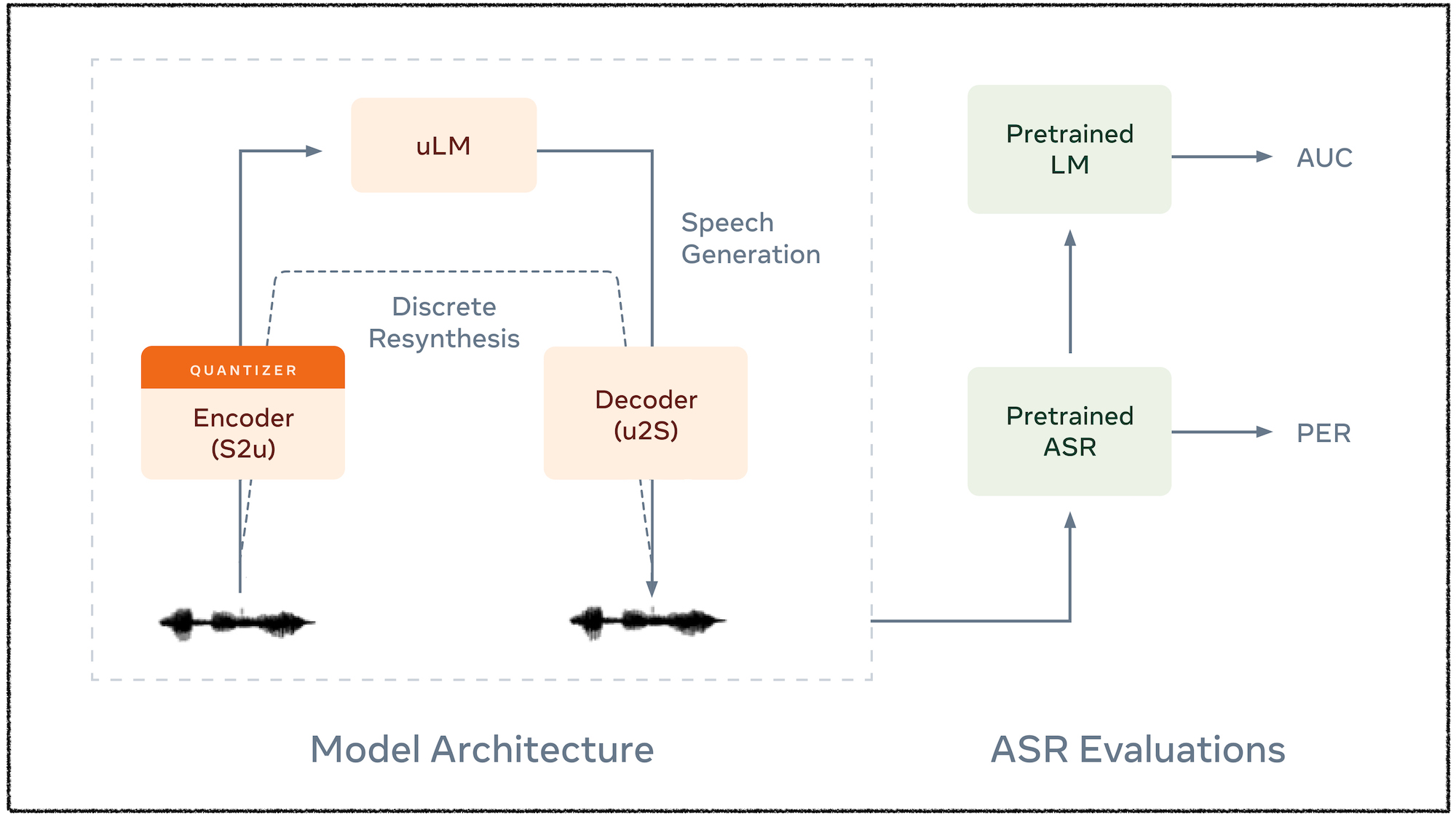

Adi Lab — Dr. Yossi Adi

Develops large language models for spoken audio and music, advancing speech recognition, enhancement, generation, and audio-based language modeling.

Lab Website ↗

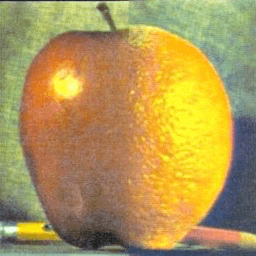

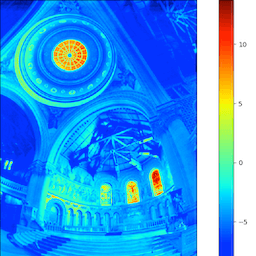

Fattal Lab — Prof. Raanan Fattal

Works on computational imaging and generative models for high-resolution image synthesis. Research areas include single-image dehazing, deblurring, edge-aware processing, and efficient diffusion models for ultra-high-resolution generation.

Lab Website ↗

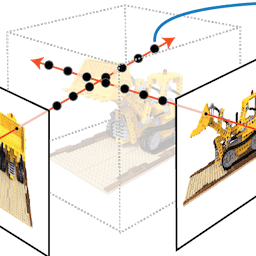

Benaim Lab — Dr. Sagie Benaim

Develops efficient methods for reconstructing, generating, and understanding dynamic 3D worlds. Research includes generative models for 3D scene editing, text-to-mesh generation, and controllable video synthesis.

Lab Website ↗

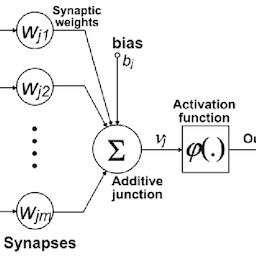

Werman Lab — Prof. Michael Werman

Applies deep learning to computer vision, with work on novel network architectures, point cloud analysis, and medical image processing. Research also covers geometric algorithms and statistical methods for visual recognition.

Lab Website ↗

Weiss Lab — Prof. Yair Weiss

Research spans computer vision, probabilistic models, and Bayesian inference for human and machine perception. Prof. Weiss also leads the TUM-HUJI AI Hub, a collaboration with the Technical University of Munich on AI research.

Lab Website ↗

Peleg Lab — Prof. Shmuel Peleg

Develops AI methods for video understanding, including video mosaicing, egocentric video processing, super-resolution, and video synopsis. Recent work focuses on speech enhancement from visual lip-movement cues, with a patent licensed to Google.

Lab Website ↗

Weinshall Lab — Prof. Daphna Weinshall

Investigates machine learning, active learning, and computer vision with connections to cognitive neuroscience. Research includes multi-annotator active learning, knowledge fusion and distillation to combat local overfitting, and visual recognition.

Lab Website ↗